U.S. courts have issued landmark rulings against Meta (formerly Facebook) and Google in cases alleging that the companies designed platforms that worsen mental health problems among young people. The cases are part of a broader wave of lawsuits against major tech companies and could lead to significant changes in how social media is regulated.

The cases, filed in New Mexico and California, presented evidence suggesting that platforms such as Instagram and YouTube have fundamental design flaws that harm users, particularly teenagers. Testimony indicated that the companies were aware of these problems yet continued to roll out new features that could worsen psychological conditions such as anxiety and depression.

Details of the Cases

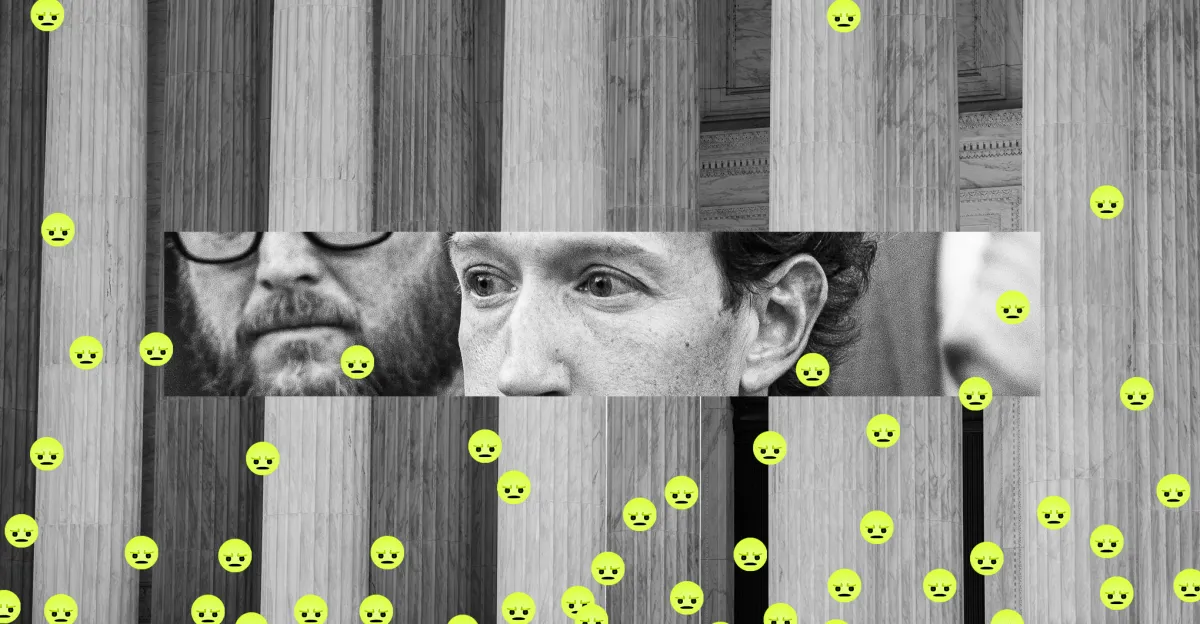

These lawsuits belong to a new wave of litigation against major tech companies and test the extent to which such companies can be held legally responsible for their effects on users' mental health. In a Los Angeles court, several company executives, including Mark Zuckerberg, testified. The defendants argued that the lawsuits threaten freedom of speech, since holding platforms liable for how they moderate and present content could conflict with the First Amendment to the U.S. Constitution.

Evidence presented in court suggested that features such as infinite scrolling and algorithmic recommendations may encourage compulsive, addiction-like use of these platforms and thereby worsen mental health problems. Former Meta employees testified about design decisions that were made despite awareness of the potential risks.

Background & Context

Historically, major tech companies have been protected by Section 230 of the Communications Decency Act, which shields online platforms from liability for content posted by their users. These lawsuits mark a potential turning point because they target the design of the platforms themselves rather than user-generated content, an approach that could change how these companies are regulated. In recent years, concern about the impact of social media on mental health, especially among young people, has grown, prompting lawmakers to consider amending existing laws.

These cases feed into a broader debate about how to regulate social media and its impact on society. Amid growing concern over youth mental health, they may mark the beginning of legal changes aimed at protecting users, particularly children and teenagers, from potential harm.

Impact & Consequences

These rulings could significantly change how social media platforms operate. If they are upheld on appeal, they may open the door to further lawsuits against tech companies and push platforms to change how their products are designed and used. Companies may be forced to reassess their features with user safety in mind, which could affect business models that depend on keeping users engaged.

These cases could also drive changes to laws that protect children and teenagers online. New legislation may regulate how platforms are designed, requiring companies to take additional steps to shield users from harmful features and content.

Regional Significance

In the Arab region, these cases could serve as a model for addressing the impact of social media on mental health. As social media use among Arab youth continues to rise, there may be an urgent need for policies that protect users from potential harm. The cases could also spark discussion about how to regulate social media in Arab countries, helping to strengthen protections for young people.

In conclusion, these rulings represent an important step towards holding major tech companies accountable for their societal impacts. As awareness of the risks associated with social media use grows, we may witness legal and regulatory changes aimed at protecting users, particularly the most vulnerable groups.