Microsoft's recent decision to classify its AI assistant Copilot as a tool for "entertainment only" has generated significant controversy. The classification is seen as a legal shield that protects the company from liability for errors that arise while the tool is in use.

The updated terms of use for Copilot, as reported by PC Mag, state that Copilot is intended for entertainment purposes only, warn that it can make errors, and advise against relying on it as a trustworthy source for important advice. The update also emphasizes that Copilot is used at the user's own risk.

Details of the Announcement

This move comes as legal pressure on technology companies over their use of artificial intelligence is increasing. Reports suggest that this type of liability disclaimer resembles the warnings often shown on television programs featuring spiritual mediums, raising questions about how seriously Microsoft takes Copilot as an effective tool for users.

Moreover, experts point out that classifying Copilot as an entertainment tool could have serious legal implications, as it may allow the company to disavow any responsibility related to intellectual property or copyright violations that may arise from the use of the tool.

Background & Context

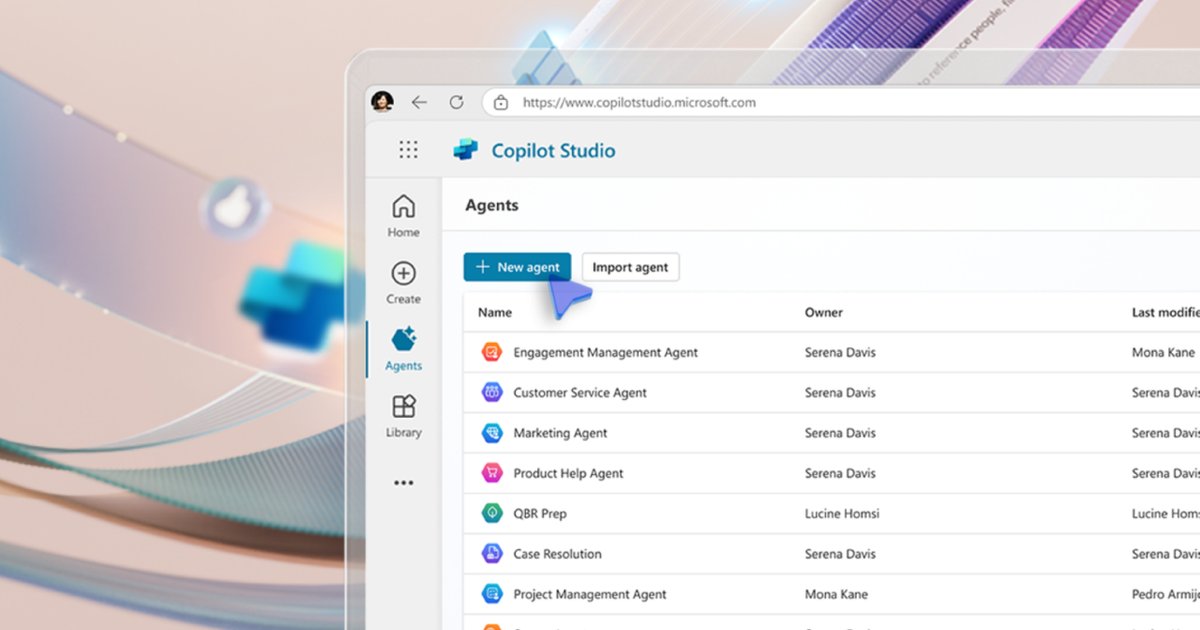

With this step, Microsoft aims to shift the burden of verifying information onto the end user, making it legally difficult for users to claim damages that result from using Copilot for serious tasks. The approach is part of a broader company strategy to promote the use of artificial intelligence in business, despite the contradiction between its marketing messages and its legal terms.

These updates primarily target users of the free versions of Copilot. Subscribers to the Microsoft 365 Copilot package are covered by separate commercial agreements that give them stronger legal commitments. Some view this as an indirect way to push professional users towards paid subscriptions.

Impact & Consequences

Reports indicate that this move represents a proactive response from Microsoft to an anticipated wave of class-action lawsuits in 2026. Once users agree that Copilot is for "entertainment only," it becomes legally difficult for them to seek compensation for damages resulting from using the tool in critical tasks.

This shift may concern users who rely on Copilot for drafting contracts or writing code, since they have no legal protection from the company if costly errors occur. Microsoft may thus have positioned itself to avoid responsibility should problems arise.

Regional Significance

As the use of artificial intelligence increases in the Arab world, Microsoft's decision may influence how companies and individuals interact with AI tools. With reliance on this technology growing, users need to be aware of the legal risks involved.

In conclusion, the classification of Copilot as an entertainment tool is a controversial step that could impact user trust in AI tools, highlighting the need for clear legislation to protect user rights and ensure the safe use of this technology.