The European Union has issued a warning to Meta, the parent company of Facebook and Instagram, emphasizing the need for more effective measures to prevent children under the age of thirteen from accessing its platforms. This warning is part of the EU's ongoing efforts to protect children from potential online dangers, amid rising concerns about the impact of social media on the mental health of children and teenagers.

The warning comes as major tech companies face growing criticism over their handling of user data, especially the data of sensitive age groups such as children. EU officials say Meta has not done enough to keep children from being exposed to inappropriate content, which leaves them open to a range of risks.

Event Details

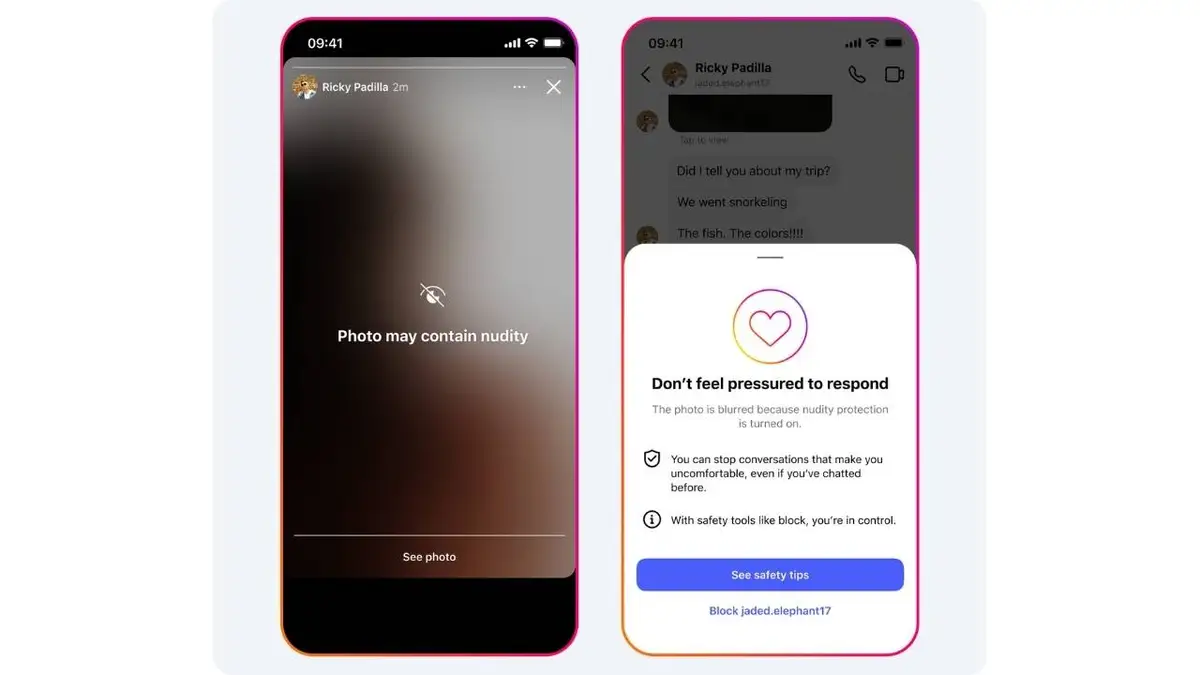

In recent years, social media platforms have seen a significant increase in users from younger age groups, raising concerns among governments and regulatory bodies. Studies have shown that children who use social media at an early age may face psychological and behavioral issues. Therefore, the EU is demanding that Meta enhance its age verification systems and implement stricter policies to prevent children from accessing inappropriate content.

Meta could also face penalties if it does not take effective steps to address these issues. In this context, the EU has stressed the importance of cooperation between governments and companies to ensure a safe online environment for children.

Background & Context

Major tech companies have long faced mounting pressure from governments worldwide to ensure the safety of their users, particularly children. The EU's General Data Protection Regulation (GDPR), which took effect in 2018, aims to protect individuals' privacy, including that of children. However, significant challenges remain in enforcing these rules effectively, and companies will need to do more.

Meta is one of the largest companies in this field and has faced repeated criticism over its management of data and content. As public awareness of the potential risks grows, the pressure on these companies has never been greater.

Impact & Consequences

If Meta does not respond to the EU's demands, it could face severe consequences, including substantial financial penalties, as well as a loss of trust from users. Furthermore, failing to take effective action could exacerbate issues related to children's mental health, negatively impacting society as a whole.

On the other hand, this pressure may lead to improved policies from other companies regarding child protection, contributing to a safer online environment. These steps could mark the beginning of a significant shift in how tech companies manage user data, especially for younger age groups.

Regional Significance

In the Arab world, social media use among youth and children has increased, raising similar concerns about their safety. Arab governments have begun to recognize the importance of protecting children online and may take steps similar to those of the EU. This could strengthen local legislation aimed at protecting children from digital risks.

In conclusion, this warning from the EU serves as a call to all companies, including those operating in the Arab world, to adopt safer and more effective policies to protect children online.